How To Supercharge Your User Interface With Skia4Delphi

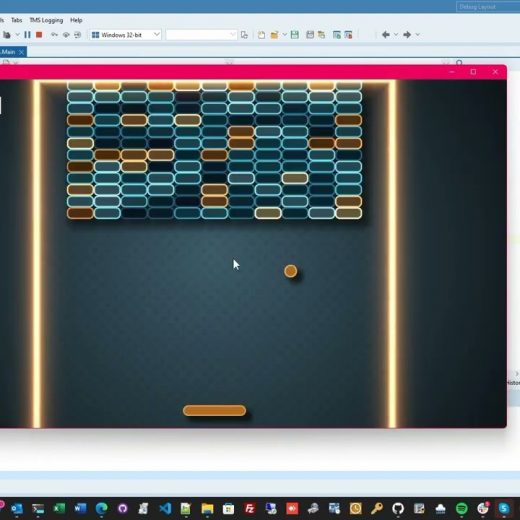

Skia is a very popular open-source 2D graphics library that provides common APIs that work across a variety of hardware and software platforms. It is sponsored and managed by Google, but it is available for use by anyone. In fact, it serves as the graphics engine for many widely used products today, including Google Chrome, Chrome OS, Android, Mozilla Firefox, and Flutter, to name a few. Skia has been around since 2005, and it has become a common part of application development on Windows and other platforms.

The same is now true for VCL and FireMonkey cross-platform apps, thanks to the Barbosa brothers from Brazil, the brilliant minds behind the Skia4Delphi library (with the encouragement of Embarcadero’s Ian Barker and Jim McKeeth). They were also hailed as 2021 Delphi Award winners for their impressive work.

What you should know about Skia4Delphi

Skia4Delphi is a cross-platform 2D graphics API for Delphi based on Google’s Skia Graphics Library. It is a free library that provides components and controls such as TSkLottieAnimation, TSkPaintBox, and TSkSvg.

In this webinar, we will take a deep dive into this useful library and discover how it can supercharge the user interface of your apps. The webinar will also discuss why vector graphics (SVG) are often a better choice than raster image formats such as JPEG and PNG. SVG and Lottie animations make it easy for designers to create smooth, high-resolution user interface templates.

The webinar also includes some cool demos, among them visually stunning shaders written in the Skia Shading Language (SkSL), and shows how this language can level up your interface with beautiful 3D animations. One of the cool things about SkSL is that it works across all platforms, and it doesn’t require OpenGL or any special drivers to be installed. Some of the demos in this webinar will also show exactly what Skia4Delphi is capable of.
These samples include an interactive Brick game, a Star Trek data dashboard replica, and many other mobile demos showcasing Skia4Delphi’s full potential. We will also be introduced to Telegram Sticker Browser, a project that helps users browse Lottie animations and Telegram stickers more efficiently.

To learn more about the amazing Skia4Delphi library and how it can turbocharge your VCL and FMX apps, feel free to watch the Skia4Delphi webinar below.

Embarcadero Technologies is also currently organizing a SKIA For Delphi Contest, encouraging everyone to build their own GUI application using Skia4Delphi features.